Here's something fun! @soycamo and I performed at DorkbotPDX's Open Mic Surgery. She brought the beats, I brought the visuals.

Watch a video of our performance.

I was lucky enough to receive a Leap Motion Controller. It acts like a short-range Kinect for your hands, tracking the position of each individual finger. The Leap's sensors are fast and spookily accurate. I love it.

For my first "real" Leap project, I planned to use the device on stage to sculpt a block of virtual clay. Initially I was targeting Flash + Stage3D (Flash's GPU-accelerated 3D API, which runs on top of OpenGL or DirectX), using a Python script to grab the Leap data and send it to Flash through a socket.

In the end, I switched to Cinder, a C++ creative-coding library, assuming it would perform better. In retrospect, Flash + Stage3D would have performed fine: even in Cinder, my laptop only managed 15-20 FPS. Instead of switching to the native C++ Leap library, I kept the (slower?) Python socket, which may have been unwise. It was also my first Cinder project, so progress was slow.

Still, I regret nothing!!! The performance was a blast. A momentary "ooooohhh" passed through the crowd when they saw the Leap in action. I had worried that the stage lights would interfere with the Leap's sensors, but the Leap was unaffected.

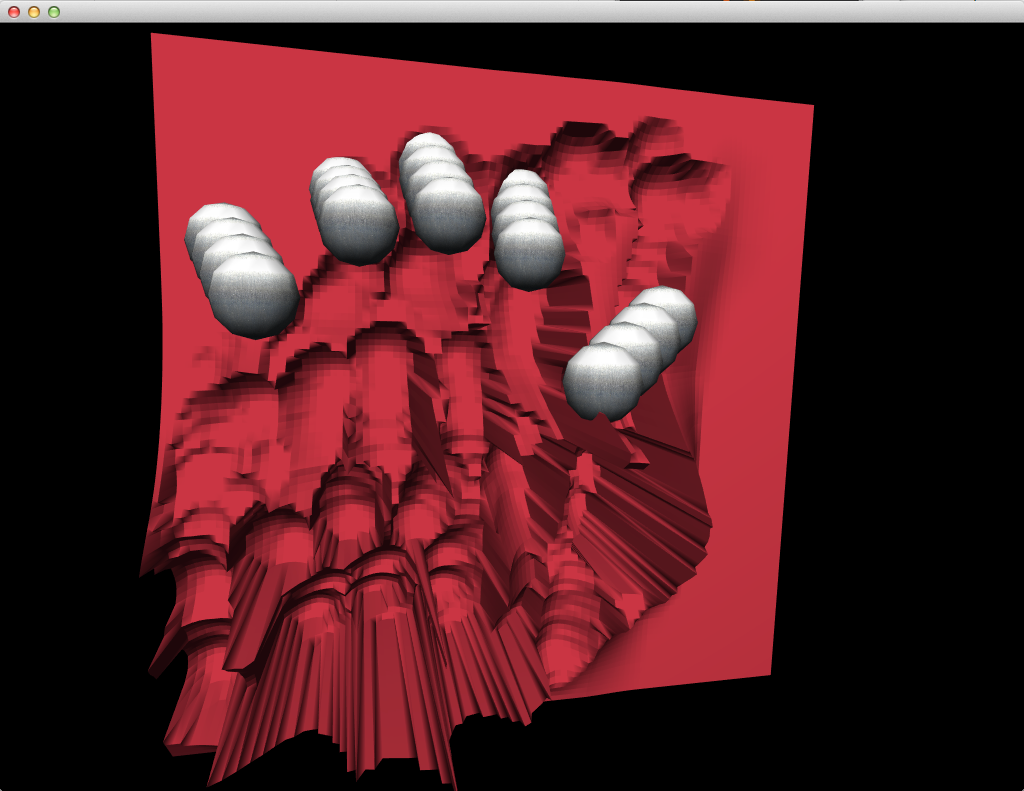

The clay's surface is stored in memory as a heightmap, and each fingertip is treated as a sphere. When a sphere dips below the surface, some of the displaced height is pushed out to neighboring cells. The heightmap is then drawn as a triangle mesh. Overall, the effect is a bit rough, but it definitely conveys the feeling of sculpting solid material.
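The paragraph above describes the idea but not the exact redistribution rule, so here is a minimal, framework-free C++ sketch of one way it could work. It assumes unit grid spacing, heights stored along z, one sphere per fingertip, and that clay cut away under a sphere is spread evenly over a one-cell-wide rim just outside the sphere's footprint (so the total amount of clay is conserved). `Heightmap`, `press`, and `triangleIndices` are hypothetical names, not taken from the project's source or from Cinder.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical clay surface: width x depth cells with unit spacing.
// Cell (i, j) sits at (x = i, y = j) and stores its height (z).
struct Heightmap {
    int width, depth;
    std::vector<float> h;  // row-major: h[j * width + i]

    Heightmap(int w, int d, float initialHeight)
        : width(w), depth(d), h(static_cast<std::size_t>(w) * d, initialHeight) {}

    float& at(int i, int j) {
        assert(i >= 0 && i < width && j >= 0 && j < depth);
        return h[static_cast<std::size_t>(j) * width + i];
    }

    // Press one fingertip, modelled as a sphere centred at (cx, cy, cz) with
    // radius r. Clay above the sphere's underside is cut down to it, and the
    // removed height is shared evenly among rim cells (r <= distance < r + 1).
    // This rim rule is an assumption made for illustration.
    void press(float cx, float cy, float cz, float r) {
        const int i0 = std::max(0, static_cast<int>(std::floor(cx - r - 1.0f)));
        const int i1 = std::min(width - 1, static_cast<int>(std::ceil(cx + r + 1.0f)));
        const int j0 = std::max(0, static_cast<int>(std::floor(cy - r - 1.0f)));
        const int j1 = std::min(depth - 1, static_cast<int>(std::ceil(cy + r + 1.0f)));

        float removed = 0.0f;
        std::vector<std::size_t> rim;
        for (int j = j0; j <= j1; ++j) {
            for (int i = i0; i <= i1; ++i) {
                const float d = std::hypot(i - cx, j - cy);  // horizontal distance
                if (d < r) {
                    // Height of the sphere's underside directly above this cell.
                    const float bottom = cz - std::sqrt(r * r - d * d);
                    float& cell = at(i, j);
                    if (cell > bottom) {
                        removed += cell - bottom;
                        cell = bottom;
                    }
                } else if (d < r + 1.0f) {
                    rim.push_back(static_cast<std::size_t>(j) * width + i);
                }
            }
        }
        // If no rim cell lies inside the grid, the removed clay is simply discarded.
        if (removed > 0.0f && !rim.empty()) {
            const float share = removed / static_cast<float>(rim.size());
            for (std::size_t k : rim) h[k] += share;
        }
    }

    // Two triangles per grid quad. Vertex k is assumed to be laid out like h:
    // position (k % width, k / width, h[k]).
    std::vector<unsigned> triangleIndices() const {
        std::vector<unsigned> idx;
        if (width < 2 || depth < 2) return idx;
        idx.reserve(static_cast<std::size_t>(width - 1) * (depth - 1) * 6);
        for (int j = 0; j + 1 < depth; ++j) {
            for (int i = 0; i + 1 < width; ++i) {
                const unsigned a = static_cast<unsigned>(j * width + i);
                const unsigned b = a + 1;                             // right neighbour
                const unsigned c = a + static_cast<unsigned>(width);  // cell in next row
                const unsigned d = c + 1;                             // diagonal
                idx.insert(idx.end(), {a, c, b, b, c, d});
            }
        }
        return idx;
    }
};
```

In a render loop, one option is to call `press` for every tracked fingertip each frame (in Cinder, for example, from the app's `update()`), then copy `h` into the mesh's vertex heights before drawing. Spreading the removed clay to a rim, rather than deleting it, is what makes pressing down raise a ridge around the finger, which is much of what makes the surface feel like solid material.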

Download the source code. I included a few sample images and videos. To get things rolling:

- Copy the Leap Python SDK files to the /python folder. (Unfortunately, I cannot provide these files. Registered Leap developers can download them from the Leap website.)

- Start the Python server: ./python/server.py

- Open and run the project in Xcode: xcode/Clay.xcodeproj

- Keys:
  - 1: start the next background video
  - ` (backtick): stop all video
  - 2: toggle silly matrix transforms
  - 3: toggle liquid mode
  - 4: reset the surface of the clay
  - 0: select a random image
  - [ and ]: display the previous/next image